In an age where seeing is no longer believing, a rapidly evolving technology is quietly undermining the very fabric of public truth. Deepfakes, hyper-realistic AI-generated videos and audio recordings, have moved from the realm of science fiction to become a potent tool for disinformation, fraud, and reputational destruction. From manipulated speeches of world leaders to fabricated evidence in courtrooms, the technology poses an urgent challenge to governments, corporations, and ordinary citizens alike.

What Are Deepfakes, and Why Now?

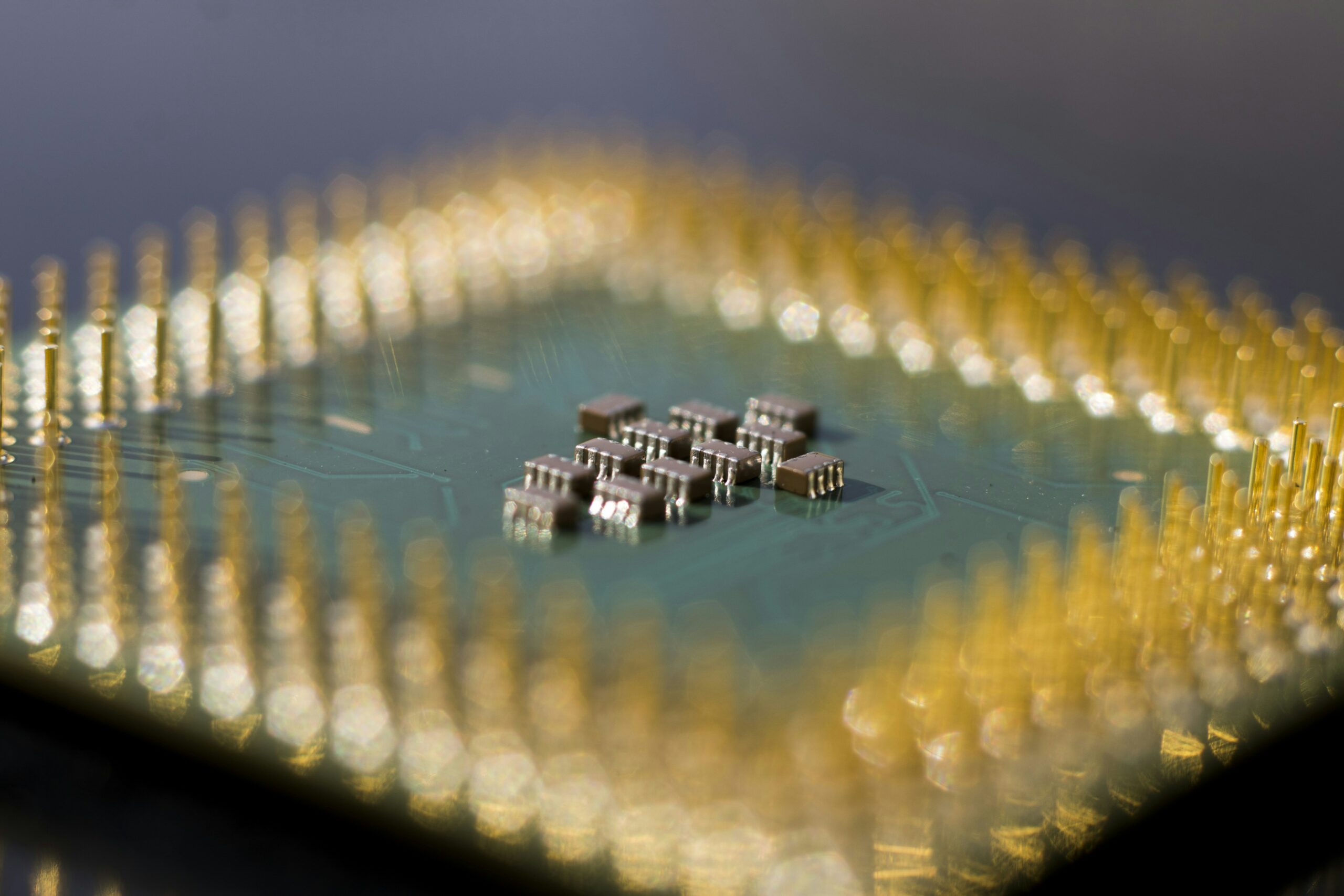

At its core, a deepfake uses a form of artificial intelligence called “deep learning” to superimpose one person’s likeness onto another’s body or to generate entirely synthetic speech. While the term dates back to 2017, the technology has matured dramatically since then. Open-source software and cheap computing power now allow virtually anyone with basic skills to create convincing forgeries.

“We’ve crossed a threshold where detection is no longer a technical arms race we can win,” said Dr. Li Wei, a researcher at the Centre for Digital Ethics at Oxford University. “The quality is now good enough that even experts need forensic tools to spot a fake.”

The implications are staggering. In 2023, a synthetic audio clip of a Ukrainian official purportedly surrendering to Russian forces circulated online for hours before being debunked. Earlier this year, a finance worker in Hong Kong was tricked into transferring $25 million after attending a video call with what appeared to be the company’s CFO. The figure on screen was in fact a deepfake recreation of the executive’s voice and face.

The Human Cost Behind the Pixels

Beyond geopolitical manipulation, the technology is inflicting real damage on private lives. Sarah Mitchell, a high school teacher in Ohio, discovered last month that a former student had used a free app to create and share a sexually explicit deepfake video featuring her face. “I felt violated in a way I cannot describe,” Mitchell told the BBC. “It wasn’t just a rumour—it was visual proof of something that never happened.”

Such cases are on the rise. According to a 2024 report by the Cyber Civil Rights Initiative, 96% of all deepfake content online is non-consensual pornography, overwhelmingly targeting women and girls. Legal frameworks have struggled to keep pace. Only seven U.S. states have comprehensive laws criminalizing the creation and distribution of non-consensual deepfake pornography.

A Fragmented Regulatory Landscape

Governments are now scrambling to respond. The European Union’s AI Act, set to take full effect in 2026, will require all synthetic content to be clearly labelled. In the United Kingdom, the Online Safety Act now criminalises the sharing of deepfake intimate images, though enforcement remains patchy. Meanwhile, China has imposed some of the world’s strictest rules, mandating that all AI-generated content be watermarked and traceable.

Yet critics argue these measures are reactive, not preventive. “Legislation can only punish after the damage is done,” notes Senator Maria Gonzalez, who co-sponsored a bipartisan U.S. bill to fund detection research. “We need a proactive approach: digital literacy in schools, robust authentication protocols, and a duty of care from tech platforms.”

What Individuals Can Do

While the problem feels vast, concrete steps exist for the average person. Security experts recommend:

- Enable multi-factor authentication on all financial and social media accounts to prevent account takeovers used to spread fakes.

- Use verification tools like Microsoft’s Video Authenticator or the open-source tool Deepware Scanner before sharing suspicious clips.

- Demand provenance standards, such as the C2PA (Coalition for Content Provenance and Authenticity) metadata that tracks a piece of media’s origin.
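
For readers curious about how provenance metadata can work in principle, the short Python sketch below illustrates the underlying idea: a publisher binds a fingerprint of the file to a statement about its origin, and anyone holding the right key can later check that neither has changed. It is a simplified illustration only and does not implement the C2PA format, which relies on certificate-based public-key signatures and manifests embedded in the media file. The function names (`sign_manifest`, `verify_manifest`), the record layout, and the use of a shared secret key are assumptions made for this example.

```python
import hashlib
import hmac


def content_hash(media_bytes: bytes) -> str:
    """Return the SHA-256 fingerprint of the media as a hex string."""
    return hashlib.sha256(media_bytes).hexdigest()


def sign_manifest(media_bytes: bytes, origin: str, key: bytes) -> dict:
    """Build a toy provenance record (hypothetical layout, not C2PA).

    The record holds an origin label, the content hash, and an HMAC
    computed over both, so that changing either one is detectable.
    """
    digest = content_hash(media_bytes)
    payload = f"{origin}|{digest}".encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"origin": origin, "sha256": digest, "signature": signature}


def verify_manifest(media_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """Check that the media matches the record and the record is unaltered."""
    digest = content_hash(media_bytes)
    payload = f"{manifest['origin']}|{digest}".encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    return signature_ok and digest == manifest["sha256"]


if __name__ == "__main__":
    secret = b"example-shared-secret"      # assumption: stand-in for a real signing key
    original = b"raw bytes of a video file"
    record = sign_manifest(original, "Example Newsroom", secret)

    print(verify_manifest(original, record, secret))          # untouched file
    print(verify_manifest(original + b"x", record, secret))   # altered file
```

In this sketch the untouched file passes the check and the altered one fails. A check of this kind shows only that a file is unchanged since it was signed, not that its contents are true, which is why provenance is best treated as one signal among several.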

The Road Ahead

The deepfake crisis is not merely a technological problem; it is a societal reckoning with the nature of evidence and trust in the digital age. As detection tools improve, so too will the forgeries. The next frontier, experts warn, will be real-time deepfakes—live impersonations during video calls that cannot be distinguished from reality.

Without a coordinated global response—combining law, education, and accountable design—the very concept of recorded truth may become a relic of the past. For now, the best defence remains a healthy scepticism, a well-informed public, and the understanding that not everything we see is real.